Cocreative performances in analog-digital interstices

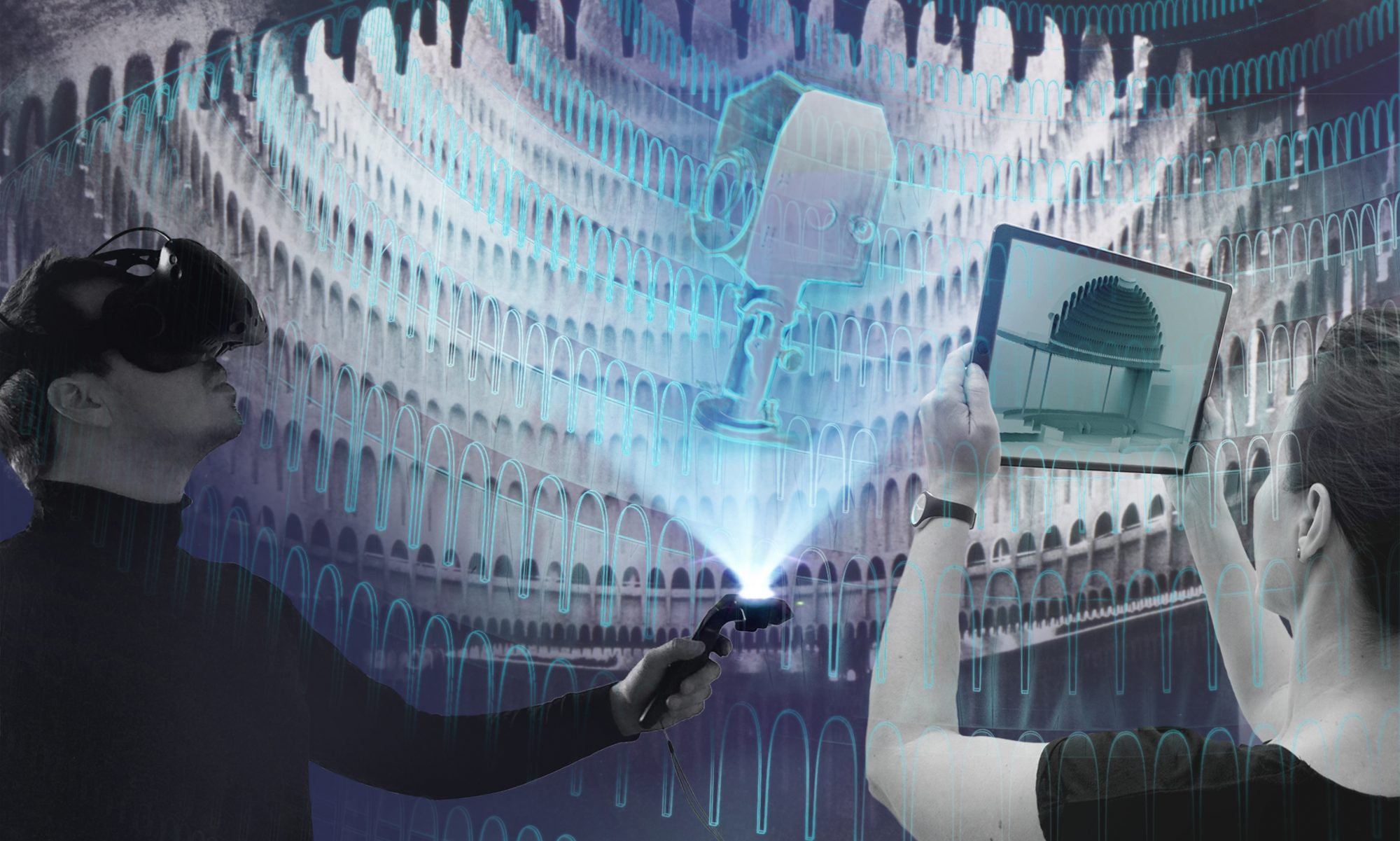

The central field of investigation in this subproject is the relationship between physical reality and virtual space, as well as the interaction processes between spectators and musicians/performers. With the development and realization of the VR performance “Spatial Encounters”, we explored to what extent linking a real, physical space with a digital, immaterial space can serve as a design tool that opens up new spaces of experience. The aim was to investigate cocreation processes in artistic stagings and performance spaces, with a focus on musical experience.

The software foundation of the performance is a web-based XR application that we developed and that is controlled by a local server. Mobile VR headsets (e.g. Meta Quest 2) access a shared virtual space through their built-in browser. This is where the live performance takes place: one or more musicians, a visual jockey acting as “Master of Virtual Scenography” and up to nine visitors meet in a dialogue on equal terms. In an open area of about 150 square meters, the audience, wearing mobile VR headsets, is immersed in a virtual scenery that is played, designed and experienced together over the following 20 minutes. Participants move freely through these digital landscapes and generate visual effects and sculptures through their encounters and their spatial relationships to one another.
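The text does not document how encounters between participants are detected, so the following TypeScript is only a minimal, hypothetical sketch of one plausible approach, not the project's implementation. It assumes each headset reports its position on the floor plane (x, z in metres) to the shared space; the `Participant` and `Encounter` types, the `findEncounters` function and the 1.5 m threshold are all illustrative assumptions, not taken from the Server or Client repositories.

```typescript
// Hypothetical sketch: derive "encounters" from the tracked floor positions
// of visitors. All names and the default threshold are assumptions.

interface Participant {
  id: string;
  x: number; // position on the floor plane, in metres (assumed)
  z: number;
}

interface Encounter {
  a: string; // id of the first participant
  b: string; // id of the second participant
  distance: number; // metres
  intensity: number; // 1 when both stand at the same spot, falling to 0 at the threshold
}

// Check every unordered pair once and report those closer than `threshold`.
function findEncounters(people: Participant[], threshold = 1.5): Encounter[] {
  const encounters: Encounter[] = [];
  for (let i = 0; i < people.length; i++) {
    for (let j = i + 1; j < people.length; j++) {
      const p = people[i];
      const q = people[j];
      const distance = Math.hypot(p.x - q.x, p.z - q.z);
      if (distance < threshold) {
        encounters.push({
          a: p.id,
          b: q.id,
          distance,
          // Linear falloff: a value a scene could map to, e.g., the size or
          // brightness of a shared visual effect (illustrative only).
          intensity: 1 - distance / threshold,
        });
      }
    }
  }
  return encounters;
}
```

For example, with visitors at (0, 0), (0.6, 0.8) and (5, 5), only the first two are about 1 m apart, so a single encounter with an intensity of roughly 0.33 is returned. With at most nine visitors there are only 36 pairs, so a brute-force pairwise check on every update is cheap and no spatial index is needed.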

Open-source code on GitHub

Server: https://github.com/digitaldthg/Web-XR-Spatial-Encounters-Server

Client: https://github.com/digitaldthg/Web-XR-Spatial-Encounters-Client

Link to the project

https://digital.dthg.de/en/projects/hybrid-real-stages/

WHAT HAPPENS NEXT?

The experimental work with the developed instruments continues. These new performative forms of work can be explored in other performance contexts and with different focuses. At the beginning of 2022, for example, we held a workshop in the Master’s programme in Stage Design and Scenic Space at the Technische Universität Berlin to explore the role that scenographers can play in this set-up. What are the potentials and limits of such a set of design rules? What influence does the design of the visual scenery have on the experience? How does one design for virtual space? The students designed a series of virtual scenes that were tested live at 1:1 scale on the same day, demonstrating the strengths of the tool.

As a next step, it would make sense to develop the tool further in collaboration with practitioners from other disciplines, such as dancers and choreographers, actors and puppeteers. Musically, an expansion is also conceivable, both into other genres such as jazz or contemporary music and toward larger ensembles or even a full orchestra. The setting also lends itself to cultural education work with children and young people, or to community music projects.

These first ideas for new contexts bring new requirements for the software. In our view, additional features should include a simplified user interface, location-independent participation via the internet, the integration of audio streams, further interaction possibilities for the audience and a spectator mode for passive visitors.